Here is a generic version of a conversation I’ve had a few too many times:

Person 1: “Hey look what I found! My favourite theory is consistent with this correlation. This thing is positively related to that thing.”

Person 2: “Good for you! Correlation doesn’t imply causation, though, right?”

Person 1: “Of course not, don’t be silly. But at least it shows that this thing is not negatively causally related to that thing.”

Person 2: “Ah, good point.”

Me: “Wait a minute…”

The by now tired meme “correlation does not imply causation” is perhaps the most important and also generally well-known rule of thumb for understanding observational data. Ridiculous examples of striking correlations underscore the importance of making this crucial distinction, ranging from cases of drowning caused by Nicolas Cage movies to space launches caused by sociology doctorates (check out this!).

As we know, the logic is simply this: two things occurring together doesn’t mean that one causes the other. It’s entirely possible that they are completely unrelated, causally speaking, even when correlations are extremely high and statistically significant. Put simply, both things may be caused by some third event that we didn’t think about or observe. Most people are able to grasp this fairly easily, and mistaking correlation for causation quickly (and rightfully) attracts ridicule.

However, there is a related argument that pops up occasionally – even from scientists, and sometimes in scientific publications – that is actually equally wrong. This is the argument that the sign of a correlation at least lets us rule out an underlying causal relationship of the opposite sign.

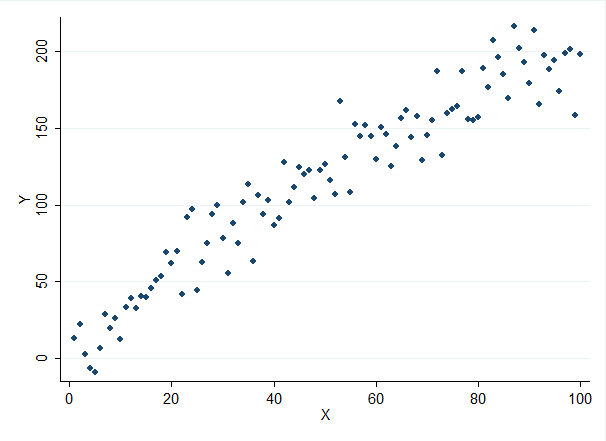

Consider the following case. First, we observe a strong positive correlation between religiosity and violence such that the more religious a society is, the higher the incidence of various types of violence. By now we know that we can’t just say that religion causes violence based on this. An alternative explanation may be that poverty causes both religiosity and violence. But in this case, some would apparently find it tempting to say that sure, this doesn’t prove that religion causes more violence, but at least we can say that religion doesn’t cause less violence. Feels appealing, doesn’t it? Wrong. And wrong for the very same reason that the first conclusion is also wrong. A positive correlation between religion and violence is STILL entirely consistent with a negative causal relationship such that religion causes less violence: if poverty pushes both up strongly enough, it can swamp a dampening effect of religion.

Let’s consider another example to illuminate this. We have a hypothesis that substance X, which occurs naturally in different plants, causes increased IQ. To test this, we launch a large observational study where we ask about consumption of X-containing foods and administer an IQ test. To our horror, we find that people who consume large amounts of X-containing foods are actually substantially less intelligent than those who consume very little. Too bad! According to the logic above, we could then rule out the hypothesized positive effect of substance X and move on to some other research project.

But hold your horses! Suddenly, we realize that actually, the most common way of ingesting X in this sample was through peanuts! When do people eat peanuts? When they drink beer! Consequently, here is another story: high beer consumption causes high X-consumption via peanuts, and also causes lower IQ. The negative effect of beer is simply larger than the positive effect of X. Our premature ruling out of a positive effect of X was just as methodologically flawed as inferring a negative effect of X. A negative correlation does not rule out a positive causal relationship, and more generally, the sign of a correlation is just as irrelevant to the question of causality as the existence of that correlation to begin with.
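To make the peanut story concrete, here is a minimal simulation sketch. Everything in it is made up for illustration: the effect sizes (+2 IQ points per unit of X, −6 per unit of beer), the noise levels, and the names `simulate` and `pearson` are my own assumptions, not estimates of anything real. The point is only that a world where X truly raises IQ can still produce a negative correlation between X and IQ.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def simulate(n=20000, effect_of_x=2.0, effect_of_beer=-6.0, seed=1):
    """Hypothetical world: beer -> X (via peanuts), X -> IQ (+), beer -> IQ (-)."""
    rng = random.Random(seed)
    beer, x, iq = [], [], []
    for _ in range(n):
        b = rng.gauss(0, 1)            # beer consumption (standardised)
        xi = b + rng.gauss(0, 1)       # more beer means more peanuts, hence more X
        q = 100 + effect_of_x * xi + effect_of_beer * b + rng.gauss(0, 5)
        beer.append(b)
        x.append(xi)
        iq.append(q)
    return beer, x, iq


beer, x, iq = simulate()
r = pearson(x, iq)
print(f"true causal effect of X on IQ: +2.0 per unit; observed correlation: {r:.2f}")
```

Under these assumed numbers the printed correlation comes out negative even though the effect of X is positive by construction. Make the beer effect weaker (say `effect_of_beer=-1.0`) and the sign of the correlation flips, while the causal effect of X stays exactly the same.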

In this fictitious example, we could then attempt to do various types of statistical controls, and sure, multiple regression etc. can help safeguard against some confounding factors. But the underlying problem remains: we may not be able to know, or measure, every conceivable confounder. Our best bet, absent the option of running an experiment, is to identify some exogenous variation that could help us infer causality.
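As a rough sketch of what “controlling for” beer buys us, the snippet below fits two least-squares slopes on simulated data: IQ on X alone, and IQ on X with beer included as a second predictor. The data-generating numbers and the helper names (`simulate`, `cov`, `naive_slope`, `adjusted_slope`) are assumptions for illustration only, and the adjustment works here purely because we happened to measure the one confounder we built into the simulation.

```python
import random


def simulate(n=20000, seed=1):
    """Hypothetical data: beer raises X intake and lowers IQ; X raises IQ by 2."""
    rng = random.Random(seed)
    beer, x, iq = [], [], []
    for _ in range(n):
        b = rng.gauss(0, 1)
        xi = b + rng.gauss(0, 1)
        beer.append(b)
        x.append(xi)
        iq.append(100 + 2.0 * xi - 6.0 * b + rng.gauss(0, 5))
    return beer, x, iq


def cov(a, b):
    """Sample covariance (divides by n; the scaling cancels in the slopes)."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    return sum((u - ma) * (v - mb) for u, v in zip(a, b)) / n


def naive_slope(x, y):
    """OLS slope of y on x alone, ignoring any confounder."""
    return cov(x, y) / cov(x, x)


def adjusted_slope(x, z, y):
    """OLS coefficient on x in the regression y ~ x + z (two-predictor normal equations)."""
    sxx, szz, sxz = cov(x, x), cov(z, z), cov(x, z)
    sxy, szy = cov(x, y), cov(z, y)
    return (sxy * szz - sxz * szy) / (sxx * szz - sxz ** 2)


beer, x, iq = simulate()
naive = naive_slope(x, iq)
adjusted = adjusted_slope(x, beer, iq)
print(f"slope of IQ on X, ignoring beer:      {naive:.2f}")
print(f"slope of IQ on X, controlling for beer: {adjusted:.2f}")
```

With these made-up numbers the naive slope is negative, while the adjusted slope lands close to the true +2. Had beer gone unmeasured, only the first, misleading number would have been available, which is exactly the worry about unknown confounders.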

Humans and probably most animals naturally see coincidence as causality. We are pattern recognition beings and these patterns tell us how to survive. That is why we jump to conclusions when we find that two data sets correlate. If a correlation persists (using split data sets) then one may rightly conclude that there is a causal relationship between them or that they are both related to yet another data set. Regression analysis does this and is widely used and misused.

If a correlation is strongly positive then it indicates that the two data sets are moving up and down together. If negative, it means they are moving oppositely to each other.

I had a personal relationship with this human “coincidence as causality” experience when I was walking down the hall of a building at night and stepped on a piece of yellow tape that was stuck across my path. When I stepped on the yellow tape the lights in the hall went out. When I took my foot off the tape the lights went back on. I was then convinced that the tape covered some sort of pressure sensor that turned the lights on and off. I then slowly put my foot closer and closer to the tape to find out how sensitive it was when the janitor peeked around the corner and said, “Oh, there is someone here, sorry, I will leave the lights on.”
